Introducing "Serve Time": The Third Phase of Modern Application Delivery

Rodrigo

For years, we've organized our understanding of software delivery around two distinct phases: build time and runtime. Build time is when we compile, bundle, and optimize our code for production. Runtime is when that code executes in the user's environment: their browser, device, or server.

This mental model served us well in a simpler era. But as web applications have evolved and edge computing has matured, something important has been hiding in plain sight: there's actually a third phase that deserves its own name and our focused attention.

We call it Serve Time.

🏗️ The Evolution That Led Us Here

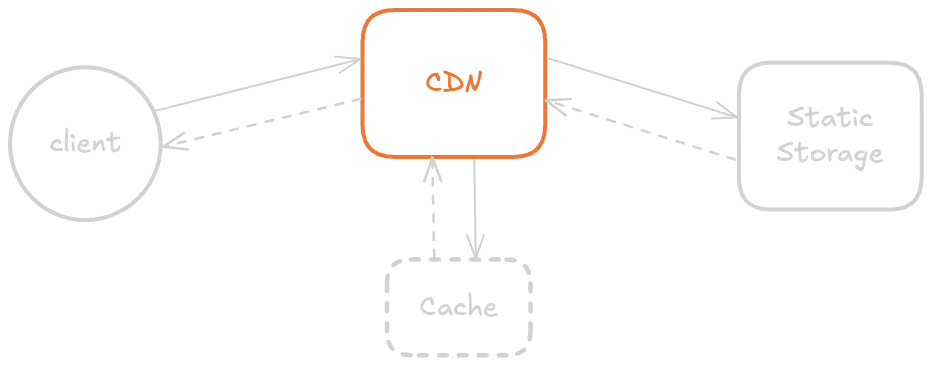

The story begins in the late 1990s when Content Delivery Networks (CDNs) first emerged to push static assets closer to users.

What started as simple caching has transformed into something far more powerful:

- 2002: Akamai coined "edge computing," envisioning computation at network edges

- 2017: AWS Lambda@Edge and Cloudflare Workers made edge functions practical

- 2019-2022: Major platforms launched edge runtime solutions, making distributed computation accessible to every developer

This wasn't just about caching anymore. We could suddenly run real logic—authentication, personalization, routing decisions—at the very edge of the network, milliseconds away from users.

⚡ Defining Serve Time

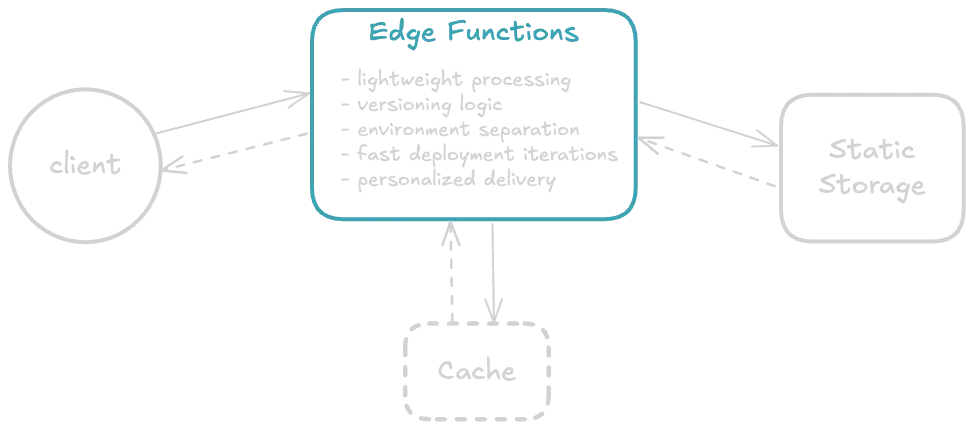

Serve Time is the precise moment when a request hits the edge and decisions are made about what to deliver—before a single byte reaches the client.

It's distinct from both build time and runtime:

- Build time prepares your code for deployment

- Serve time intelligently decides what to send and how

- Runtime executes that code in the user's environment

During Serve Time, you can:

- Route intelligently based on user location, device, or session data

- Personalize content without waiting for origin server round-trips

- Apply security filters before traffic reaches your infrastructure

- Control deployments with instant rollbacks and phased rollouts

- Optimize delivery through smart caching and compression decisions
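To make the list above concrete, here is a minimal sketch of a serve-time decision written as a pure function. The `EdgeRequest` and `ServeDecision` shapes, the `latam`/`global` regions, and the path layout are all assumptions made for illustration; real edge platforms expose request data through their own APIs.

```typescript
// Hypothetical request shape. Real platforms provide similar data in their own way.
interface EdgeRequest {
  path: string;
  country: string;   // assumed to be derived by the platform, e.g. from the client IP
  userAgent: string;
  blocked: boolean;  // assumed result of an earlier security check
}

interface ServeDecision {
  status: number;
  asset: string | null;
  cacheControl: string;
}

function decideAtServeTime(req: EdgeRequest): ServeDecision {
  // Security filter: reject before anything reaches the origin.
  if (req.blocked) {
    return { status: 403, asset: null, cacheControl: "no-store" };
  }

  // Device-based routing using a deliberately simple user-agent check.
  const device = /Mobi/i.test(req.userAgent) ? "mobile" : "desktop";

  // Location-based routing. The region mapping here is illustrative only.
  const region = req.country === "BR" ? "latam" : "global";

  // Caching decision attached to the same response.
  return {
    status: 200,
    asset: `/${region}/${device}${req.path}`,
    cacheControl: "public, max-age=60",
  };
}
```

Keeping the decision a pure function of the request makes it easy to test outside any particular edge runtime.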

🎯 Why This Phase Matters

Traditional architectures force you to make these decisions either too early (at build time, when you don't know who the user is) or too late (at runtime, after network latency has already impacted user experience).

Serve Time gives you the best of both worlds: real-time intelligence with edge-level performance.

Consider a practical example: an e-commerce site needs to show different product recommendations based on user location, time of day, and browsing history. In a traditional setup, this requires:

- Loading a generic page

- Making API calls to personalization services

- Re-rendering with personalized content

- Multiple round-trips and client-side processing

With Serve Time logic, the edge can:

- Analyze the incoming request

- Select the appropriate pre-built page variant

- Deliver personalized content in a single response

- Do all of this close to the user, often in under 50ms
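The variant selection step can be sketched roughly as follows. This assumes the build produced a manifest that maps context keys to pre-built pages; the `ShopperContext` and `VariantManifest` types and the key format are hypothetical, not something the platform defines.

```typescript
// Hypothetical inputs available at the edge for one request.
interface ShopperContext {
  country: string;
  localHour: number;            // 0-23, in the shopper's local time
  lastViewedCategory?: string;  // e.g. read from a cookie (assumption)
}

// Hypothetical manifest emitted at build time.
interface VariantManifest {
  byKey: Record<string, string>;
  fallback: string;
}

function selectVariant(ctx: ShopperContext, manifest: VariantManifest): string {
  const dayPart = ctx.localHour < 12 ? "morning" : "evening";

  // Try the most specific key first, then progressively more general ones.
  const candidates = [
    `${ctx.country}:${dayPart}:${ctx.lastViewedCategory ?? "any"}`,
    `${ctx.country}:${dayPart}`,
    ctx.country,
  ];

  for (const key of candidates) {
    const hit = manifest.byKey[key];
    if (hit !== undefined) return hit;
  }

  // No variant matched: serve the generic page rather than failing.
  return manifest.fallback;
}
```

The lookup is a handful of map reads, which is the kind of work that fits comfortably inside edge CPU and time limits.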

⚖️ The Tradeoffs of Edge Logic

Serve Time isn't magic—it comes with engineering tradeoffs:

Execution Constraints: Edge environments typically have tighter memory limits, stricter CPU budgets, and shorter timeouts than traditional servers.

Distributed Debugging: When logic runs across hundreds of edge locations, troubleshooting requires new approaches and better observability tools.

Stateless Architecture: Edge functions are inherently stateless, so persistent data requires external stores or creative caching strategies.

Cost Considerations: While edge logic can reduce origin load, it adds its own costs that need to be balanced against performance gains.

These constraints aren't blockers—they're design parameters that push us toward cleaner, more efficient architectures.

🚀 Serve Time Powers Zephyr Cloud

Here at Zephyr Cloud, Serve Time isn't just a concept—it's the architectural foundation of our entire platform.

We use edge logic for:

Blazing-Fast Deployments: Our edge-first architecture means your updates go live in seconds, not minutes or hours, with zero downtime.

Dynamic Version Management: Instead of traditional deployments that require cache invalidation and propagation delays, our edge workers dynamically route to the correct version of your application based on request parameters, user cohorts, or rollout percentages.
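One common way to route by rollout percentage is deterministic bucketing: hash a stable identifier into a bucket from 0 to 99 and compare it to the rollout percentage. The sketch below is a generic illustration of that technique, not a description of Zephyr Cloud's internals. The `Rollout` shape and the function names are assumptions, and the hash is an FNV-1a style hash over UTF-16 code units.

```typescript
// FNV-1a style 32-bit hash over the string's UTF-16 code units.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // 32-bit multiply by the FNV prime
  }
  return hash >>> 0; // convert to an unsigned 32-bit integer
}

// Map a user to a stable bucket in the range 0..99.
function bucketFor(userId: string): number {
  return fnv1a(userId) % 100;
}

// Hypothetical rollout rule stored alongside the edge routing config.
interface Rollout {
  stable: string;
  candidate: string;
  candidatePercent: number; // 0..100
}

function resolveVersion(userId: string, rollout: Rollout): string {
  // The same user always lands in the same bucket, so they see a consistent
  // version as long as the percentage does not change.
  return bucketFor(userId) < rollout.candidatePercent
    ? rollout.candidate
    : rollout.stable;
}
```

Because the bucket depends only on the identifier, raising `candidatePercent` from 10 to 20 keeps the original 10% on the candidate and adds new users, rather than reshuffling everyone.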

Instant Rollbacks: When something goes wrong, we don't rebuild and redeploy—we update edge routing rules to instantly redirect traffic to the previous stable version.
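Conceptually, a rollback of this kind is a pointer swap rather than a rebuild. The following sketch models the routing rules as a small immutable table. The `RoutingTable` shape and the `promote`/`rollback` helpers are hypothetical names used only to illustrate the idea.

```typescript
// Hypothetical routing table: which version is live, plus what was live before.
interface RoutingTable {
  active: string;
  history: string[]; // previously active versions, most recent last
}

// Point traffic at a new version and remember the one it replaced.
function promote(table: RoutingTable, version: string): RoutingTable {
  return { active: version, history: [...table.history, table.active] };
}

// Point traffic back at the most recent previous version.
function rollback(table: RoutingTable): RoutingTable {
  const previous = table.history[table.history.length - 1];
  if (previous === undefined) return table; // nothing to roll back to
  return { active: previous, history: table.history.slice(0, -1) };
}
```

Both operations only change which already-deployed version the edge points at, which is why no rebuild or redeploy is involved.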

Multi-CDN Intelligence: Our platform abstracts away CDN complexity by making smart routing decisions at serve time, automatically selecting the best provider based on real-time performance data.
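As a rough illustration of provider selection from performance data, the sketch below filters out providers with a high error rate and then picks the lowest latency among the rest. The `ProviderStats` fields, the 1% error threshold, and the selection rule are assumptions for the example; the actual selection logic may weigh many more signals.

```typescript
// Hypothetical per-provider measurements collected in near real time.
interface ProviderStats {
  name: string;
  p95LatencyMs: number;
  errorRate: number; // fraction of failed requests, 0..1
}

function pickProvider(
  stats: ProviderStats[],
  maxErrorRate: number = 0.01, // assumed health threshold
): string | null {
  // Prefer healthy providers, but fall back to all of them if none qualify.
  const healthy = stats.filter((s) => s.errorRate <= maxErrorRate);
  const pool = healthy.length > 0 ? healthy : stats;

  // Choose the provider with the lowest p95 latency in the pool.
  let best: ProviderStats | null = null;
  for (const s of pool) {
    if (best === null || s.p95LatencyMs < best.p95LatencyMs) {
      best = s;
    }
  }
  return best === null ? null : best.name;
}
```

Falling back to the full list when no provider is healthy is a design choice that favors serving something over serving nothing.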

🧠 The Mental Model Shift

By naming and recognizing Serve Time as its own phase, we change how we architect applications:

Instead of thinking: "I'll build this once and runtime will handle everything else"

We think: "I'll build multiple variants, serve time will choose the right one, and runtime will execute it efficiently"

This shift unlocks patterns that weren't practical before:

- Per-user application versions

- Geographic feature rollouts

- Real-time A/B testing without JavaScript

- Progressive enhancement based on device capabilities

- Intelligent fallbacks that happen before the client knows anything went wrong
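As one example from this list, A/B testing without client-side JavaScript can be done by assigning the variant at the edge and pinning it with a cookie. The sketch below is a minimal illustration: the `ab_variant` cookie name, the 50/50 split, and the 30-day lifetime are all assumptions.

```typescript
interface AbResult {
  variant: "A" | "B";
  setCookie: string | null; // value for a Set-Cookie header, or null if already assigned
}

// `random` is injectable so the assignment can be tested deterministically.
function assignVariant(
  cookieHeader: string,
  random: () => number = Math.random,
): AbResult {
  // Reuse an existing assignment so returning visitors see a consistent variant.
  const match = /(?:^|;\s*)ab_variant=(A|B)(?:;|$)/.exec(cookieHeader);
  if (match !== null) {
    return { variant: match[1] as "A" | "B", setCookie: null };
  }

  // First visit: pick a variant and ask the browser to remember it for 30 days.
  const variant = random() < 0.5 ? "A" : "B";
  return {
    variant,
    setCookie: `ab_variant=${variant}; Path=/; Max-Age=2592000`,
  };
}
```

The edge then serves the page built for the chosen variant, so the browser receives the right version in the first response with no flicker or client-side swap.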

🌟 The Future is Serve Time

Build time and runtime remain essential, but neither captures the intelligence layer that modern applications require. Serve Time fills that gap.

As edge computing continues to mature, the applications that thrive will be those designed with all three phases in mind: building for flexibility, serving with intelligence, and executing with performance.

The web's future isn't just about faster builds or smarter runtimes—it's about making better decisions at the moment that matters most: when content meets user, at the edge of the network.